META: This is also published on Medium.

“A clever person solves a problem. A wise person avoids it.”

Albert Einstein

Life Is Risky. Learn to Live With It.

I can’t get anywhere if I don’t take risks, but I won’t get anywhere if I’m reckless.

I have found that it’s…risky for me to evaluate risk without some kind of mental framework.

In this essay, I’ll give a brief overview of my approach to managing risk.

I’m Terrible at Evaluating Risk

When it comes to evaluating risk, the human brain is a remarkably flawed instrument.

Many studies have examined how we perceive risk and how it affects decision-making ([01]–[12], below).

Here’s a rather entertaining take on it. [13]

TL;DR. We suck at risk. We need help.

Below is a pithy, off-the-cuff, unscientific mental exercise that I use to reduce bias when thinking about risk and evaluating ways to deal with it.

This is NOT a full-scale risk analysis exercise! In fact, it’s likely to make actuaries fall to the floor, foaming at the mouth.

The Three Factors of Risk Management

When I manage a risk, I first analyze it, then plan how to handle it by developing strategies for Prevention, Mitigation, and Remedy.

Prevention

Prevention is taking steps to reduce the likelihood of the risk manifesting.

For example, if I’m going to use a bucket to hold some liquid, and I’m concerned about that liquid spilling and causing damage, one prevention strategy could be to use a large bucket and not fill it too much. That reduces the likelihood of the liquid splashing over the sides, and it also lowers the center of gravity.

I might also place the bucket somewhere where it won’t get kicked over.

Mitigation

Mitigation is taking steps to reduce the consequences of the risk manifesting.

In the bucket example above, a possible mitigation strategy would be to place the bucket on a surface that is unlikely to be damaged by the liquid if it spills.

Remedy

Remedy is taking steps to deal with the fallout if a risk does manifest.

In the above example, having a mop and bucket nearby could be a remedy.

Scoring the Risk

Before I get to managing the risk, however, I need to measure it. Some risks aren’t worth worrying about, while others could be existential threats.

The Two Axes of Risk Measurement

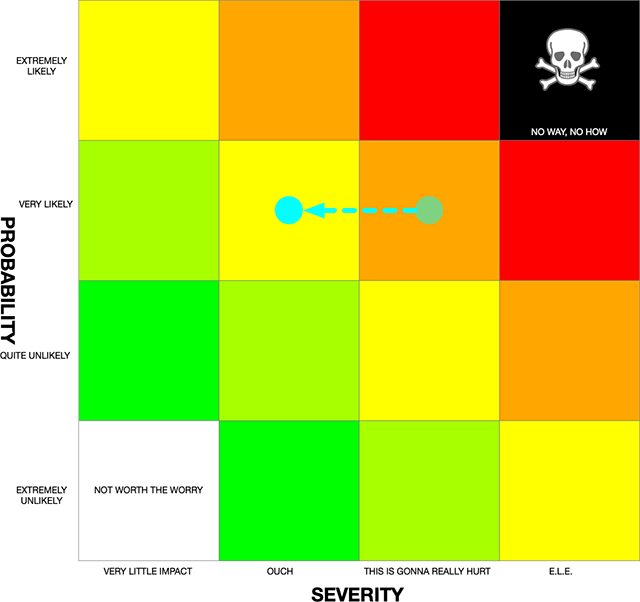

When I measure a risk, I use two axes to “score” it: Probability and Severity.

Probability

Probability is the likelihood of a risk manifesting.

In the above example, having the bucket out in a high-traffic area means that the likelihood of a spill is higher than if it were placed in an out-of-the-way corner.

I address probability by working on prevention.

Severity

Severity is a measure of the consequences of a risk manifesting.

In the above example, if the bucket is filled with distilled water and it spills, it’s likely to do a lot less damage than if it were filled with chlorine trifluoride (ClF3) [14].

I address severity by working on mitigation.
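Keeping the two axes apart is the heart of this approach, so here is a minimal Python sketch of the idea. It assumes each axis is an ordered scale of “gut” labels; the labels come from the example later in this essay, but their exact ordering and the `Risk`, `prevent()`, and `mitigate()` names are hypothetical. The integers only record order, not actual probabilities. What it illustrates: prevention can only move a risk along the probability axis, and mitigation only along the severity axis.

```python
from dataclasses import dataclass, replace
from enum import IntEnum


class Probability(IntEnum):
    # Hypothetical ordering of this essay's probability labels (order only, not odds).
    EXTREMELY_UNLIKELY = 0
    QUITE_UNLIKELY = 1
    VERY_LIKELY = 2
    EXTREMELY_LIKELY = 3


class Severity(IntEnum):
    # Hypothetical ordering of this essay's severity labels.
    VERY_LITTLE_IMPACT = 0
    OUCH = 1
    THIS_IS_GONNA_REALLY_HURT = 2
    ELE = 3  # "Extinction-Level Event"


@dataclass(frozen=True)
class Risk:
    name: str
    probability: Probability
    severity: Severity


def prevent(risk: Risk, new_probability: Probability) -> Risk:
    """Prevention lowers the probability; severity is left untouched."""
    if new_probability > risk.probability:
        raise ValueError("prevention should not make a risk more likely")
    return replace(risk, probability=new_probability)


def mitigate(risk: Risk, new_severity: Severity) -> Risk:
    """Mitigation lowers the severity; probability is left untouched."""
    if new_severity > risk.severity:
        raise ValueError("mitigation should not make a risk more severe")
    return replace(risk, severity=new_severity)


# Bucket example: moving the bucket out of a high-traffic area is prevention.
bucket = Risk("liquid spills", Probability.VERY_LIKELY, Severity.OUCH)
bucket = prevent(bucket, Probability.QUITE_UNLIKELY)
print(bucket.probability.name, bucket.severity.name)  # QUITE_UNLIKELY OUCH
```

Because each function touches only one field, there is no way to “fix” severity by working on prevention, which is exactly the separation the text argues for.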

A Quick “Back of the Envelope” Method

That’s all fine and good, but what do buckets have to do with software? Where are the numbers? If we geeks can’t express ourselves in numbers, we just go all to pieces…

Prepare to go to pieces, then. I don’t use numbers.

This isn’t actuarial science. A lot of my programming methodology comes from experience and “gut instinct.” If I can avoid going down a rabbit hole, I will.

This is a quick way to “score” a risk, without getting too “science-y.”

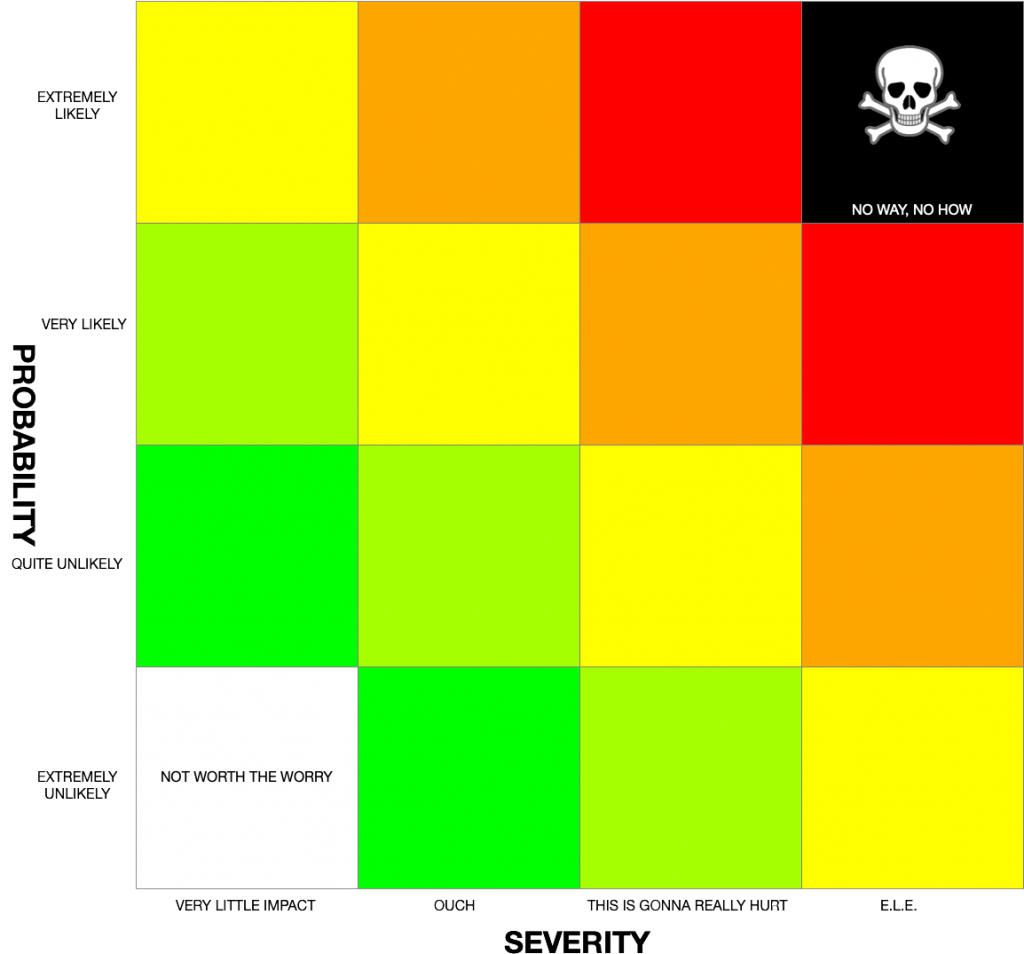

In the chart above, I look at each risk with very broad “gut” assumptions, judging it from experience rather than from calculation.

I’ve found that splitting the risk into its two components and evaluating each one separately helps me be a great deal more objective. The important part of this exercise is divorcing probability from severity. If you look at the references below ([01]–[12]), you will see that, when we perceive risk, we tend to let one axis color our judgment of the other (either thinking a very severe risk will manifest more frequently than it really does, or that a milder risk will manifest less frequently than it actually does).

I evaluate probability first. How likely is it that the risk will manifest? For example, if I’m designing a device driver that uses wireless connectivity, and the risk I’m examining is that commands will fail to reach the device, how likely is it that they will fail to reach their destination?

In a lab, this may be “Extremely Unlikely.” However, in the field, the probability may rise to “Very Likely,” or even “Extremely Likely,” depending on things like the device’s distance from the controller or the presence of a lot of metal.

Next, I evaluate severity. What are the consequences of the risk manifesting? If the device is a coffeemaker, failed commands may result in nothing more than cold coffee. However, if it’s a robot assembly controller, the consequences could be an industrial accident, with all the fun stuff therein.

In Chart 1, the X-axis is severity, and the Y-axis is probability.

“E.L.E.” stands for “Extinction-Level Event.”

When scoring a risk, I use the two axes to determine where the risk lands.

If it is red, then I shouldn’t take the risk, unless I can come up with a very good remedy.

If it is yellow or orange, then I should definitely consider prevention, mitigation, and remedy. I should not ignore the risk.

If it is green, I can do some work on it if it’s not too much trouble, but the world won’t end if I don’t.

White means don’t bother. Black means don’t take the risk.
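Chart 1 is essentially a lookup table, so here is a minimal Python sketch that encodes a hypothetical 4×4 version of it. The grid is an illustrative assumption, not a copy of the real chart: only two cells are taken from the robot example below (“Extremely Likely” with “This Is Gonna Really Hurt” lands in red, and, assuming the software upgrade gets severity down to “Ouch,” “Very Likely” with “Ouch” lands in yellow). The guidance strings paraphrase the color rules above.

```python
# Hypothetical encoding of Chart 1: rows are probability (least to most likely),
# columns are severity (least to most severe). Only two cells are grounded in
# the robot example; every other cell is an illustrative guess.
PROBABILITY = ["Extremely Unlikely", "Quite Unlikely", "Very Likely", "Extremely Likely"]
SEVERITY = ["Very Little Impact", "Ouch", "This Is Gonna Really Hurt", "E.L.E."]

ZONES = [
    # Very Little Impact, Ouch,  Really Hurt, E.L.E.
    ["white", "white", "green", "orange"],  # Extremely Unlikely
    ["white", "green", "yellow", "red"],    # Quite Unlikely
    ["green", "yellow", "orange", "black"], # Very Likely
    ["green", "orange", "red", "black"],    # Extremely Likely
]

GUIDANCE = {
    "white": "Don't bother.",
    "green": "Do some work if it's not too much trouble; optional.",
    "yellow": "Definitely consider prevention, mitigation, and remedy.",
    "orange": "Definitely consider prevention, mitigation, and remedy.",
    "red": "Don't take the risk unless there is a very good remedy.",
    "black": "Don't take the risk.",
}


def score(probability: str, severity: str) -> str:
    """Return the zone color for a (probability, severity) pair."""
    # Each axis is looked up on its own; list.index raises ValueError
    # for a label that isn't on the scale.
    row = PROBABILITY.index(probability)
    col = SEVERITY.index(severity)
    return ZONES[row][col]


# The robot arm from the example, before and after treatment.
before = score("Extremely Likely", "This Is Gonna Really Hurt")
after = score("Very Likely", "Ouch")
print(before, "->", after)  # red -> yellow
print(GUIDANCE[after])
```

The two lookups never interact until the final cell is read, which mirrors the advice to judge probability and severity separately before combining them.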

If I use prevention and mitigation (remedy is for after a risk manifests), I may be able to move the risk into “greener pastures,” so to speak.

An Example

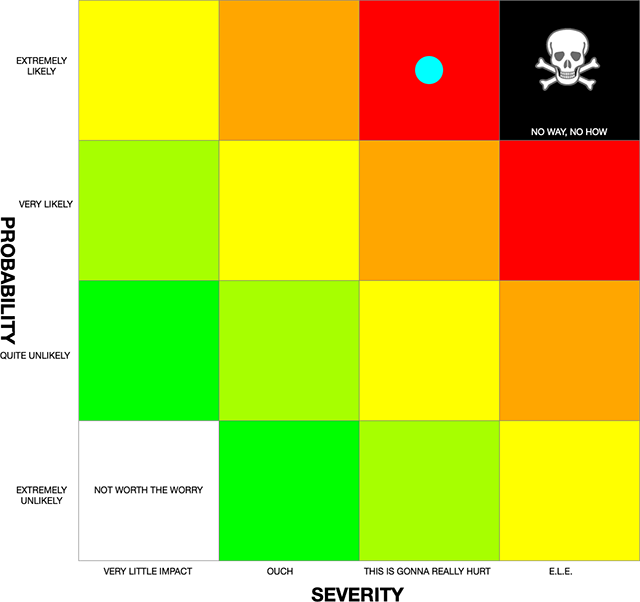

In Chart 2, let’s say that my device driver controls a robot arm in a factory.

Probability

Because of the presence of metal, and because the person who controls the robot has a duty station that requires them to walk around the floor, the probability of interference with commands is “Extremely Likely.” Additionally, the robot’s programming was designed for constant human monitoring (for safety), so the user needs to interact with the robot frequently. This further adds to the likelihood of a communication failure.

Severity

Because this robot moves large pieces of fairly raw steel in an environment of mixed robots and humans, the severity of a communication failure could be fairly high. I call the severity “This Is Gonna Really Hurt.”

Ugh

That lands us in the upper red zone. Not good.

What to do?

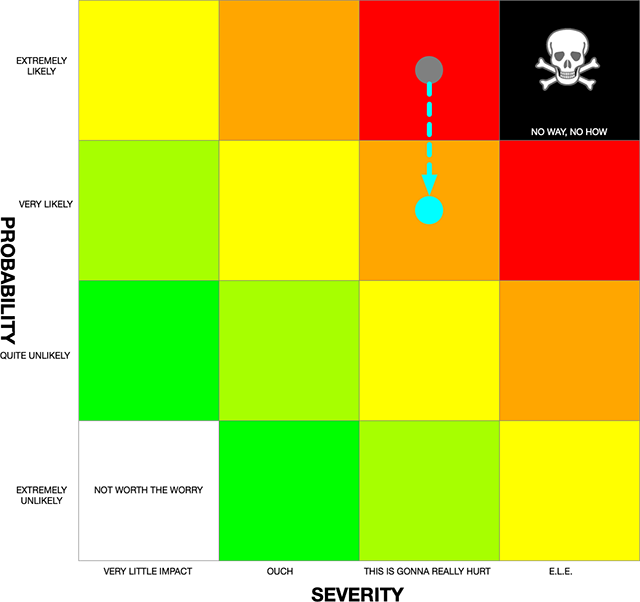

Reduce the Probability

The first thing I’d do is find a way to reduce the probability of a problem. This could be done in a few ways:

1. I could implement a policy that requires the user to be within 10 feet of the machine every half hour (or however long the requirement is between checks).

2. I could replace a lot of the metal around the machine with composites or wood.

3. I could design a subsystem of the robot program that allows more autonomy, lessening the requirement for human intervention.

Let’s say that if I implemented each of these suggestions, I could reduce the probability all the way down to “Quite Unlikely.”

However, the last two are extremely expensive, so I might decide to try to get away with just #1 (user gets up close and personal with the machine). Let’s say that reduces the likelihood to “Very Likely.” Not great, but better than “Extremely Likely.”

Reduce the Severity

The next thing that I examine is severity. Right now, it’s pretty bad. Let’s see if I can come up with some ideas to reduce the severity:

1. I could put up shielding around the device, so if anything flies off, it won’t get far. This would need to be high-impact plastic, to avoid radio interference.

2. I could remove the human workers from the area, relying only on robots.

3. I could design a subsystem of the robot program that immediately stops everything if there is even the slightest deviation from the plan.

If I implemented all of these, I could probably reduce the severity to “Very Little Impact,” but, as above, they would come with costs. Let’s see if I can get down to “Ouch.”

#1 sounds like the best idea, but it has a couple of issues:

Even plastic will cause some interference. Since I’m not doing anything to improve the radio link (like replacing all the metal with other materials), that extra interference could cause problems.

Also, even if nothing else gets damaged, the damage to the robot itself would be severe, as it would now be enclosed in a shell that could reflect shrapnel back into the device.

#2 would be extremely expensive, and could cost people their jobs.

Looks like I have to bite the bullet and work on an upgrade to the software (#3). This won’t be a simple project, but it is the only option that fits within my budget. If I do this, no one needs to lose their job, and the machine is less likely to suffer damage.

If I do both of these (the proximity policy and the software upgrade), I could move the risk into yellow territory. Still not wonderful, but I’ll take what I can.

The above shows a fairly concrete example of how I use this rather “naive” methodology to evaluate and reduce risk.
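The software option chosen in the example, a subsystem that stops everything at the slightest deviation from the plan, might start from a check like the one in this minimal Python sketch. Everything in it is hypothetical: the `ArmState` fields, the 1 cm tolerance, and the 0.5-second command timeout are illustrative values, not figures from a real machine. It also assumes that going too long without a fresh command counts as a deviation, since lost commands are the very risk being managed. The design fails safe: any doubt leads to a stop.

```python
import math
from dataclasses import dataclass


@dataclass
class ArmState:
    """Hypothetical snapshot of the robot arm, for illustration only."""
    planned_xyz: tuple[float, float, float]  # where the plan says the arm should be (meters)
    actual_xyz: tuple[float, float, float]   # where the sensors say it is (meters)
    seconds_since_last_command: float        # time since the last command arrived


# Hypothetical limits; real values would come from the machine's own safety analysis.
MAX_DEVIATION_M = 0.01
COMMAND_TIMEOUT_S = 0.5


def should_stop(state: ArmState) -> bool:
    """Return True if the arm should halt immediately."""
    deviation = math.dist(state.planned_xyz, state.actual_xyz)
    if deviation > MAX_DEVIATION_M:
        return True  # the arm is off-plan by more than the tolerance
    if state.seconds_since_last_command > COMMAND_TIMEOUT_S:
        return True  # commands stopped arriving: treat silence as a deviation
    return False


def control_step(state: ArmState) -> str:
    # Fail safe: any doubt about the plan or the radio link stops the arm.
    return "STOP" if should_stop(state) else "CONTINUE"


print(control_step(ArmState((1.0, 2.0, 0.5), (1.0, 2.0, 0.5), 0.1)))   # prints CONTINUE
print(control_step(ArmState((1.0, 2.0, 0.5), (1.05, 2.0, 0.5), 0.1)))  # prints STOP (5 cm off-plan)
print(control_step(ArmState((1.0, 2.0, 0.5), (1.0, 2.0, 0.5), 2.0)))   # prints STOP (link silent)
```

Treating a silent link as a deviation is what ties this mitigation back to the original risk: a lost command can no longer leave the arm moving on stale instructions.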

CITATIONS

[01] Risk Perception in Psychology and Economics, by Kenneth J. Arrow (1982). NOTE: Not free.

[02] Perception of Risk Posed by Extreme Events, by Paul Slovic and Elke U. Weber (2013)

[03] Risk Perceptions and Risk Characteristics, by Hye-Jin Paek and Thomas Hove (2017)

[04] Availability Heuristic (Wikipedia)

[05] Clustering Illusion (Wikipedia)

[06] Confirmation Bias (Wikipedia)

[07] Neglect of Probability (Wikipedia)

[09] Risk Compensation (Wikipedia)

[10] Survivorship Bias (Wikipedia)

[11] Zero-Risk Bias (Wikipedia)

[12] Heck, Here’s the Whole Freaking List (Wikipedia)

[13] Five Logical Fallacies That Make You Wrong More Than You Think (Cracked.com, 2011)